BALANCING LEARNING AND ACCOUNTABILITY: A CURRICULUM DESIGN FRAMEWORK FOR THE TWO-LANE ASSESSMENT MODEL

The two-lane assessment model is gaining attention in response to growing demands for evidentiary assurance in the context of generative AI and digitally enabled education. The model separates secure assessment pathways (lane 1) from developmental learning pathways (lane 2), allowing institutions to support authentic learning processes while maintaining defensible evidence of student achievement. However, the two-lane model has largely been implemented as a retrofitted assessment intervention, due to factors such as cost and workload, rather than as a structural component of curriculum design. More recently, locating lane 1 assessment at the program level has been proposed as a more considered response, but this move introduces an unresolved curriculum design problem: if lane 1 accountability shifts to the program level, program learning outcomes (PLOs) must carry the operational burden that course learning outcomes (CLOs) previously bore, yet they are rarely designed with the specificity required to anchor valid, defensible, high-stakes assessment. This article argues that resolving this problem requires a shift in how constructive alignment (CA) is applied, locating its operational anchor at the program level and redesigning PLOs accordingly. In response, the article proposes a curriculum design framework for CA within the two-lane model. By treating lane 1 and lane 2 as complementary design elements, the framework enables purposeful integration of developmental learning and certified achievement while maintaining coherence and credible accountability of learning in AI-mediated and online environments.

KEY WORDS: learning outcomes, assessment, constructive alignment, two-lane, artificial intelligence, online learning, higher education

1. INTRODUCTION

Generative artificial intelligence (gen-AI) has significantly disrupted assessment practices in higher education, prompting institutions to reconsider how student learning is evidenced and evaluated (Perkins et al., 2024). Concerns about academic integrity, authorship, and the provenance of student work have intensified as gen-AI systems can generate sophisticated academic artefacts that are difficult to distinguish from human-produced work (Cotton et al., 2024). At the same time, universities operate within increasingly stringent accountability frameworks that require demonstrable and defensible evidence of student achievement for accreditation, quality assurance, and public trust (Havnes & Prøitz, 2016). These converging pressures have renewed attention to assessment design and have led to the development of new architectures for structuring assessment in gen-AI-mediated environments in an attempt to balance support for student learning with institutional demands for secure evidence of achievement (Lodge et al., 2025).

These issues are particularly acute in online and digitally mediated education. Online learning environments expand access and flexibility but also introduce additional complexities for evidencing authorship and verifying student performance (Cramp et al., 2019; Gamage et al., 2020). When teaching, learning, and assessment occur through digital platforms, the boundaries between independent student work, collaborative activity, and gen-AI-supported production can become difficult to observe directly (Dawson et al., 2024). Traditional mechanisms for confirming authorship, such as in-person supervision, invigilation, or tacit familiarity with student work, are less readily available in online settings or are more complex and costly to implement (Holden et al., 2021; Lodge et al., 2025). As a result, institutions delivering online or hybrid education face heightened challenges in ensuring that assessment evidence is both valid and defensible across distributed learning environments. These conditions amplify the need for assessment architectures that can distinguish between developmental learning processes and secure certification of achievement within digitally mediated environments (Boud & Associates, 2010; Cotton et al., 2024).

Within this context, the two-lane assessment model has emerged in Australian higher education discussions about academic integrity and gen-AI (Liu & Bridgeman, 2023). The model proposes a structural separation between secure, certified assessment (lane 1) and developmental, learning-oriented assessment (lane 2). To date, the two-lane assessment model has largely been treated as an intervention within existing curricula rather than as a structural reconfiguration of curriculum design (Bridgeman et al., 2024). In practice, this has meant that institutions often attempt to retrofit two-lane practices onto existing course-level assessment structures (Corbin et al., 2025). This pattern reflects pragmatic constraints, as implementing secure assessment in every course is often infeasible due to cost, workload, and scalability limitations associated with proctoring, invigilation, or oral assessment (Lodge et al., 2025). More recently, locating lane 1 assessment at the program level has been proposed as a more considered response (Bridgeman et al., 2024), drawing on constructive alignment (CA) (Biggs, 1996), the dominant curriculum design paradigm in higher education (Charlton & Newsham-West, 2022). However, this move introduces an unresolved curriculum design problem: program learning outcomes (PLOs), as conventionally written, are not designed with the specificity required to anchor the valid, defensible, high-stakes assessment that program-level lane 1 demands.

In response, we propose a curriculum design framework that shifts the operational anchor of CA from the course to the program level, beginning with the redesign of PLOs and rebuilding alignment downward from there. In doing so, the article positions the two-lane model not merely as a response to gen-AI, but as part of a broader reconsideration of how assessment systems operate in online and digitally mediated higher education environments. While framed through an Australian assessment model, the underlying problem addressed in this paper, how institutions can maintain evidentiary assurance while supporting learning in gen-AI-mediated environments, is shared across global higher education systems. As such, for international readers, the value of the two-lane model lies less in its local origin and more in the structural questions it raises: how institutions can separate developmental learning processes from certified evidence of achievement while maintaining coherent curriculum design. The framework proposed here addresses those questions directly, with a reconception of the role and design of PLOs as its foundational move, and a set of interconnected design artefacts that make that reconception operational across the full architecture of a program.

2. THE TWO-LANE ASSESSMENT MODEL

The rapid diffusion of gen-AI tools has prompted institutions globally to reconsider how assessment can simultaneously support learning and provide credible evidence of student achievement (Cotton et al., 2024; Dawson et al., 2024). These pressures are particularly visible in online and hybrid education, where learning interactions occur across distributed digital environments, the provenance of student work can be more difficult to observe, and generative AI-assisted academic production is widely accessible through online platforms (Gamage et al., 2020; Holden et al., 2021). In such contexts, institutions must reconcile two competing imperatives: enabling students to engage productively with digital tools while ensuring that assessment outcomes remain defensible to internal and external stakeholders and accountability measures (Havnes & Prøitz, 2016).

The two-lane assessment model has emerged in Australian higher education discussions as one response to the assessment challenges introduced by gen-AI and digitally mediated learning environments (Liu & Bridgeman, 2023). Originally developed at the University of Sydney, the model is now being explored or adopted by a number of institutions internationally (see, for example, Australian Catholic University, 2025; University of Auckland, 2026; University of Bath, 2026). Such adoption suggests the challenge it addresses is not unique to Australian contexts but reflects assessment pressures that are increasingly shared across higher education systems. In online and hybrid education contexts, where much student work is produced and submitted through digital platforms, the model provides a mechanism for balancing developmental learning with credible evidentiary assurance by separating assessment activities into two structurally complementary pathways (Cotton et al., 2024; Dawson et al., 2024; Liu & Bridgeman, 2023). Lane 1 focuses on secure assessment, where conditions are designed to provide robust evidence that students themselves have achieved the required learning outcomes. These tasks are typically high stakes and may be situated at the program level in order to support accreditation, quality assurance, and institutional accountability, or simply at the course level to assure that learning outcomes have been attained. Lane 2, by contrast, supports developmental learning processes. It allows students to engage with feedback, to collaborate, and to explore gen-AI in ways that enhance learning without necessarily serving as the primary evidence for formal certification (Bridgeman et al., 2024).

3. EMERGING TENSIONS

While CA has long provided a coherent model for curriculum design (Biggs & Tang, 2011), the two-lane assessment model highlights a new and significant tension: course-level learning outcomes must now simultaneously support pedagogical alignment and provide defensible, program-level evidence. Yet in practice, the conditions under which two-lane is implemented mean these functions increasingly diverge (Lodge et al., 2025). Although the initial application of the two-lane model as a transitional intervention was effective (Bridgeman et al., 2024), moving beyond retrofitted course-level implementation raises deeper questions about how CA must be reconceptualized, and, in particular, the role that PLOs are now required to play.

3.1 Constructive Alignment in Contemporary Universities

In the contemporary university, CA (Biggs, 1996) typically operates with a locus that prioritizes the course within the program (Charlton & Newsham-West, 2022), following a clear progression: PLOs align with course learning outcomes (CLOs), which in turn align with course rubrics, assessment tasks, and learning activities. Within this framework, CLOs function as the operational lynchpin of curriculum design, translating broad program expectations into specific, measurable, and observable outcomes that can be taught and assessed within individual courses (Biggs & Tang, 2011).

The strength of this model lies in its emphasis on transparency and intentionality. Students are made aware of what they are expected to learn and how their learning will be assessed, fostering deeper approaches to learning (Biggs et al., 2022). For educators, CA provides a systematic method for designing curricula in which teaching activities, assessment tasks, and learning outcomes work cohesively toward clearly articulated educational goals. Consequently, the model has been widely adopted internationally and is frequently embedded within institutional quality assurance and accreditation processes (Ruge et al., 2019). This widespread adoption has also extended the function of CA beyond its original pedagogical intent. While Biggs's initial emphasis on measurable outcomes referred to their observability, enabling educators to judge whether an outcome had been achieved (Biggs, 1996), CA now also serves institutional imperatives for defensible evidence of learning attainment, satisfying expectations from accreditation bodies, quality assurance agencies, and outcomes-focused funding models (Havnes & Prøitz, 2016; Banta & Blaich, 2010).

A more fundamental limitation of CA in the current context is its silence on questions of authorship, invigilation, and the provenance of student work. These were not part of CA's original conceptual frame, which was designed to align teaching and learning rather than to certify the conditions under which student work was produced. The rise of gen-AI has exposed the tension latent in CA's extension into institutional accountability: a framework conceived for pedagogical alignment is now being asked to underwrite evidential security in conditions it was never designed to address. This does not render CA obsolete, but it does mean that its conceptual frame must be extended. The two-lane model makes that extension necessary, and the framework proposed in this paper attempts to provide it.

3.2 How the Two-Lane Model Disrupts Alignment

The two-lane model is the immediate catalyst for this necessary extension of CA's conceptual frame. By separating secure certification from developmental learning, its underlying logic has led proponents to advocate for lane 1 assessment being positioned at the program level and lane 2 activities being situated within courses (Liu & Bridgeman, 2023; Bridgeman et al., 2024). While the two-lane model does not explicitly prescribe where formative or summative assessment must occur, this advocacy reflects a combination of pedagogical and practical considerations that make program-level lane 1 a compelling, if not universal, response. Additionally, even critiques of the model that advocate less binary approaches frequently retain the assumption that some form of secure “no-AI” assessment remains anchored at the program level (Guy, 2025).

Fundamentally, the two-lane model exposes a tension in the dual role historically served by CLOs: learning-oriented development and accountability-oriented outcomes (Havnes & Prøitz, 2016). In traditional CA, CLOs translate broad program expectations into observable, measurable course-level outcomes, providing both student guidance and defensible evidence for institutional accountability (Biggs, 1996; Biggs & Tang, 2011; Charlton & Newsham-West, 2022). Yet, when the two-lane model is retrofitted to existing programs, logistical constraints, such as cost and workload (Lodge et al., 2025), often limit lane 1 assessments to certain points within the program rather than every course. Therefore, under current two-lane conditions, CLOs may remain pedagogically meaningful, serving as developmental signposts, feedback anchors, and guides for student learning, but they no longer independently generate defensible evidence for accountability purposes (Corbin et al., 2025; Dawson et al., 2024).

This shift carries significant implications for both program coherence and student progression. Lane 2 assessments scaffold learning and support iterative feedback, helping students internalize program-level expectations. However, without deliberately sequenced lane 1 assessments, students may progress through courses without producing sufficient evidence of capability, and feedback cycles may fail to feed forward effectively (Havnes & Prøitz, 2016; Charlton & Newsham-West, 2022). Ultimately, post hoc implementations of two-lane risk creating “constructive coherence”: curricula that appear internally consistent but are decoupled from cumulative program-level evidence. Students may develop knowledge and skills yet lack valid, auditable evidence demonstrating attainment of program-level outcomes.

As lane 2 activities alone cannot generate validated evidence of achievement, the responsibility for defensible assessment rests on strategically placed lane 1 tasks, thereby relocating the operational centre of CA to the program level. This creates a tension between what is assessable and what is accountable: CLOs retain pedagogical specificity but are no longer institutionally defensible, while PLOs carry accountability but, in the absence of curriculum redesign, remain too diffuse to be validly evidenced through the pragmatic but ad hoc placement of lane 1 tasks. Bridgeman et al. (2024) advocate program-level assessment on pedagogical grounds, citing benefits including holistic design, coherent student development, and improved feedback utility, and in doing so align with a broader and growing trend in assessment scholarship. However, the move to program-level lane 1 assessment exposes a fundamental gap in curriculum design thinking. When accountability shifts from the course to the program level, PLOs must assume the operational burden that CLOs previously held: they must be specific, measurable, and observable enough to anchor valid, defensible, high-stakes assessment. Yet PLOs, as conventionally written, tend to be broad and aspirational, appropriate as descriptions of graduate capability but insufficiently granular to serve as the direct basis for reliable judgment in lane 1 assessment (Charlton & Newsham-West, 2022). This is the curriculum design problem the two-lane model has not yet solved, and it is the problem this paper addresses.

4. TOWARD EFFECTIVE CONSTRUCTIVE ALIGNMENT IN A TWO-LANE MODEL

The tension between learning-oriented and accountability-oriented outcomes in the two-lane assessment model requires reconsideration of how CA is enacted in curriculum design. While the two-lane model is relevant across multiple teaching contexts, it is particularly significant for online and digitally delivered programs, where assessment evidence is often distributed across platforms, learning interactions are asynchronous, and institutions require defensible mechanisms for demonstrating student achievement at scale. In such contexts, the two-lane model should not be viewed as a simple add-on or final checkpoint for assessment. Rather, it requires a program-wide reconceptualization of the alignment between outcomes, assessment, and learning. Because evidentiary assurance can no longer be fully anchored at the course level alone, alignment must shift to program-level structures, with PLOs reconceived to perform the operational role that CLOs previously held: providing the specific, measurable, and observable statements of capability that anchor valid, high-stakes assessment.

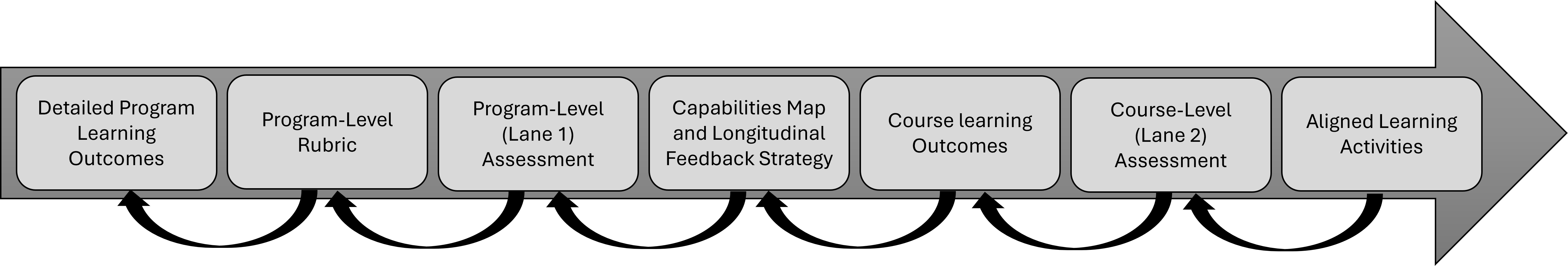

To operationalize CA within a two-lane assessment architecture, we propose a curriculum design framework that links program-level design decisions with course-level teaching and assessment (Fig. 1). The framework follows a design logic that begins where the problem is located: with the redesign of detailed PLOs, and progresses through the development of a shared program-level rubric, the design of secure program-level (lane 1) assessment, the creation of a capabilities map and longitudinal feedback strategy, the reconceptualization of CLOs, the design of developmental course-level (lane 2) assessments, and finally the alignment of learning activities that support students' progression across the program. Together, these elements establish an assessment architecture that integrates evidentiary assurance with developmental learning processes.

FIG. 1: Curriculum design framework for implementing constructive alignment within a two-lane assessment architecture. The rightward arrow represents the sequential design logic linking program learning outcomes, program-level assessment (lane 1), course-level outcomes and assessments (lane 2), and aligned learning activities. The leftward arrows indicate the iterative nature of curriculum design, where earlier stages are revisited as programs refine outcomes, assessment strategies, and learning activities over time.

Presenting the framework in this order highlights how evidentiary assurance (lane 1) and developmental learning processes (lane 2) must be deliberately coordinated across program and course levels. The stages are presented sequentially to clarify the underlying design logic; however, in practice, curriculum development is iterative rather than strictly linear. Programs frequently revisit earlier stages to refine outcomes, recalibrate assessment design, and adjust learning activities based on student progression data, program review, and evaluation. The following sections explain each of these design stages in detail.

4.1 Detailed Program Learning Outcomes

The shift toward evidentiary assessment against the PLOs requires a reconsideration of their granularity and articulation. Traditionally, programs have articulated a small number of broad PLOs that encapsulate graduate capabilities in general terms. However, such high-level statements are rarely specific or detailed enough to meaningfully anchor the rigorous accountability demands of lane 1. Expanding both the number and specificity of PLOs provides the granularity required to support measurable, observable outcomes that are capable of being validly assessed across diverse courses. These enhanced PLOs should move beyond generic aspirations to define assessable capabilities that reflect the complex, integrative nature of graduate attributes.

4.2 A Program-Level Rubric as the Anchor of Alignment

Once PLOs have been redesigned with the specificity required for lane 1 assessment, a shared criterion language, such as a rubric that operates at the program level, is needed to operationalize them consistently. Building on the notion of course-level rubrics (Moss & Ruthnappulige, 2025), a program-level rubric comprises capability descriptors that deconstruct the PLOs at the levels and standards that will be assessed, functioning both as a developmental scaffold and a standard-setting mechanism. This artefact provides students and staff with a consistent vocabulary for judging performance, so that standards are applied consistently regardless of the specific course or assessor. The transparency and validity that the program-level rubric provides become vital in lane 1 assessment, where defensibility and comparability are key. Building the shared criterion language needed to construct such a rubric requires significant academic collaboration and professional development to ensure interpretive consistency across the program, and in the process makes explicit program knowledge that is often tacit.

4.3 Program-Level (Lane 1) Assessment: Secure, Certified, and Integrative

Lane 1 assessments, secure, certified assessments typically located at the program level, form the evidentiary backbone of institutional assurance. These program-level assessments (Charlton & Newsham-West, 2022) aim to evaluate students' ability to integrate knowledge, demonstrate transferable skills, and perform authentically in complex scenarios. The design of such assessments often includes capstone projects, portfolios, comprehensive exams, or simulated professional tasks (Boud & Associates, 2010). Their alignment to the shared criterion language and PLOs ensures consistency and validity. Importantly, these tasks must also meet high standards of reliability and security, particularly in light of increasing concerns around gen-AI and contract cheating. Task design, proctoring methods, and clear integrity protocols all contribute to the evidential strength of lane 1 assessments. While the two-lane model does not require lane 1 to be located at the program level, doing so offers strategic advantages in managing academic workload and aligning assessment with accreditation and reporting cycles (Bridgeman et al., 2024) as well as ensuring effective use of secure methods such as proctoring for online students (Cramp et al., 2019).

4.4 The Capabilities Map and Longitudinal Feedback Strategy

To ensure that students construct knowledge over time in alignment with enhanced PLOs, a comprehensive capabilities map and coordinated feedback strategy play a central role in supporting student development toward lane 1 assessment. The capabilities map provides a representation of how specific program-level skills and sub-skills are introduced, taught, and developed across the program. It supports curriculum planning, enabling teaching teams to identify redundancy or gaps in learning progression. A longitudinal feedback strategy complements this map by coordinating feedback across courses and semesters. Rather than being transactional or confined to individual courses, feedback becomes cumulative and developmental. This helps emphasize to students that feedback literacy and continuity are vital for meaningful learning (Carless & Boud, 2018). Designing opportunities for peer feedback, self-assessment, and repeated engagement with shared criteria across courses enables students to track progress over time, develop self-regulation, and build toward the standards required for lane 1 assessments. These essential formative processes (Nicol & Macfarlane-Dick, 2006) are central to this longitudinal approach.
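As an illustration only, the gap and redundancy check that a capabilities map supports can be sketched in a few lines of Python. The course codes, PLO labels, and the rule that treats a capability introduced in more than one course as redundant are hypothetical assumptions made for this sketch, not part of the framework itself; only the three stages (introduced, taught, developed) come from the description above.

```python
from collections import defaultdict

# Stages taken from the description of the capabilities map.
STAGES = ("introduced", "taught", "developed")

# Hypothetical map entries: (course, PLO, stage). All codes are illustrative.
CAPABILITIES_MAP = [
    ("COURSE101", "PLO1", "introduced"),
    ("COURSE201", "PLO1", "taught"),
    ("COURSE301", "PLO1", "developed"),
    ("COURSE101", "PLO2", "introduced"),
    ("COURSE102", "PLO2", "introduced"),
    ("COURSE301", "PLO2", "developed"),
    ("COURSE201", "PLO3", "taught"),
]


def review_map(entries, plos):
    """Return (gaps, redundancy) for the given PLOs.

    gaps: PLO -> stages with no course attached.
    redundancy: PLO -> courses that all introduce the same capability
    (an assumed, simplified definition of redundancy).
    """
    by_plo = defaultdict(lambda: defaultdict(list))
    for course, plo, stage in entries:
        by_plo[plo][stage].append(course)

    gaps, redundancy = {}, {}
    for plo in plos:
        missing = [stage for stage in STAGES if not by_plo[plo][stage]]
        if missing:
            gaps[plo] = missing
        introducers = by_plo[plo]["introduced"]
        if len(introducers) > 1:
            redundancy[plo] = introducers
    return gaps, redundancy


gaps, redundancy = review_map(CAPABILITIES_MAP, ["PLO1", "PLO2", "PLO3"])
print(gaps)
print(redundancy)
```

In this hypothetical program, PLO2 is introduced twice but never explicitly taught, and PLO3 is taught without being introduced or developed. A teaching team would need to interpret such flags in context, since some repetition may be deliberate reinforcement rather than redundancy.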

4.5 Course Learning Outcomes

In this model, CLOs retain importance but serve a different function. This repositioning follows directly from the redesign of PLOs: as PLOs take on the operational specificity and accountability function previously carried by CLOs, CLOs are freed to fulfil their original pedagogical purpose. They shift from being endpoints of certified accountable attainment to operating as developmental signposts of learning—scaffolds that guide students toward program-level capabilities, much like their original intent (Biggs, 1996). While CLOs still structure course content and align with formative and course-specific summative tasks, they no longer carry the institutional burden of certification. Their language should reflect this repositioning: CLOs must remain measurable and alignable, but without implying formal evidentiary or institutional assurance. This enables CLOs to support localized, contextualized learning while maintaining their role within the broader capabilities map. Consequently, CLOs transition from being the “final measure” of student achievement to becoming essential pedagogical tools embedded within program-wide development.

4.6 Course-Level (Lane 2) Assessment: Context-Specific and Developmental

Designing lane 2 assessments that generate genuine student engagement requires deliberate application of CA, making the developmental logic of the program visible to students. Rather than assuming that low-stakes conditions will naturally produce authentic participation, we must use transparent course structures to show how each lane 2 task connects to program-level expectations. This shifts the locus of responsibility: when the purpose of lane 2 is explicit, students can make an informed choice about their engagement (Moss & Ruthnappulige, 2025). The decision not to engage remains theirs, but it can no longer be justified by a lack of clarity about the task's value.

Lane 2 assessments are designed to be contextualized, iterative, and primarily developmental, supporting students as they build the knowledge, skills, and evaluative judgment required for lane 1 assessments. These low-stakes course-level tasks allow students to apply learning, receive feedback, and rehearse the thinking and performance expected at the program level, while also providing opportunities for reflection and self-regulation (Sadler et al., 2022; Nicol & Macfarlane-Dick, 2006). When deliberately aligned with lane 1, course assessments can mirror or scaffold complex program-level tasks, using shared criterion language, to familiarize students with the standards their lane 1 work will eventually be judged against. By doing so, lane 2 functions not only as a site of learning but as a bridge to the cumulative, secure judgments represented by lane 1. Through this connection, students develop the metacognitive skills and evaluative judgment needed to perform under the rigorous conditions of lane 1.

In lane 2, generative AI tools can be incorporated safely as part of the feedback ecosystem. When designed intentionally (Corbin et al., 2025), AI can support rehearsal, self-assessment, and automated feedback on drafts, enhancing feedback literacy and readiness for lane 1. The two-lane architecture ensures that experimentation with AI remains low stakes, while secure certification and high-stakes judgments are preserved in lane 1, maintaining a clear separation of risk while enabling meaningful learning engagement.

4.7 Aligned Learning Activities

Learning activities continue to play a central role in CA, but their design must now balance local course needs with program-level trajectories. Activities should support students in achieving the CLOs while also preparing them for the demands of lane 1 assessments. This dual alignment necessitates a cultural shift in the academic community. Rather than viewing courses as self-contained “silos,” educators must see their teaching as a shared contribution to program-level attainment. Activities that build evaluative judgment, encourage application of shared criterion language, and foster critical reflection can help students navigate the complex learning expectations that unfold over time. By explicitly linking learning activities to both course-level and program-level outcomes, educators ensure that engagement is purposeful and progressive. This shift in perspective is as important as any structural design change, and its implications for faculty development are taken up in the following section.

5. CHALLENGES FOR IMPLEMENTATION

The proposed framework for curriculum design using a two-lane model offers a pathway toward balancing assurance and learning. However, effective implementation of this framework within the two-lane assessment paradigm presents a series of complex, systemic challenges that institutions must engage with critically and proactively. While the model offers significant opportunities for secure, distributed, and program-level assurance, these benefits are not automatic, nor is the model one-size-fits-all. Without deliberate design interventions and sustained institutional support, the two-lane model risks creating unintended pedagogical, motivational, and ethical consequences for both learners and educators. Institutions that opt to adopt the two-lane model must therefore balance the risks and rewards it offers. This section outlines key areas where implementation challenges are likely to arise, not to propose definitive universal solutions but to surface issues that must be actively and discursively engaged with in both policy and practice.

5.1 Lane 2 Participation

One of the most immediate risks in the two-lane model is the potential marginalization of lane 2 assessment activities. If students perceive these assessments as low stakes or disconnected from certification, they may deprioritize them in favor of competing demands or workloads. And for those who do engage, the absence of secure conditions means that gen-AI can be used to complete lane 2 tasks with minimal effort, producing apparently successful outcomes that do not reflect genuine learning. Both of these behaviors, disengagement and AI exploitation, are precisely what prompted the development of the two-lane model in the first place: they are the reasons unsecured assessment can no longer reliably serve as evidence of authentic student achievement and why lane 1 certification exists.

The two-lane model clearly recognizes these risks at the summative level, which is why lane 1 exists. However, the question of how to secure genuine student engagement at the formative level has received less attention. Existing advocacy for the model, including that of Liu and colleagues, tends to assume that lane 2 participation will follow naturally from the model's structure. That assumption warrants closer examination and represents an area where further research and design attention are needed. What is clear is that institutions cannot simply assume lane 2 engagement will happen; they must be deliberate in how they design for it, and the framework proposed in this paper begins to address that through the explicit alignment of lane 2 tasks to program-level expectations and deconstructed learning journeys.

Institutions face the challenge of preserving the pedagogical value of lane 2 without reverting to summative grading or coercive participation strategies. If students fail to engage meaningfully, the scaffolding required for success in lane 1 assessments is significantly weakened, yet heavy-handed responses risk undermining the open, low-stakes character that makes lane 2 valuable in the first place. Structural changes to lane 2 assessment practices are therefore required (Corbin et al., 2025).

5.2 Deferring High-Stakes Assessment

A key challenge in implementing the two-lane model is the deferral and concentration of high-stakes (lane 1) assessment, particularly toward the end of a program, or the end of year levels within a program, in order to assure attainment of PLOs. If students reach certification points underprepared, having not identified or addressed learning gaps through lane 2, they may encounter sudden failure with limited opportunity for recovery. This risk is particularly acute for online learners, first-in-family students, and other equity-seeking groups for whom early success and accurate self-assessment are critical to academic identity and persistence (Shaikh & Asif, 2022). Where and how judgment occurs can either reinforce or alleviate educational inequities.

Program-level assessments also pose practical challenges. They often sample large domains of knowledge, sometimes across multiple courses, making it difficult for markers to provide specific, actionable feedback. As Boud and Molloy (2012) note, learners typically require multiple cycles of feedback to master complex concepts, but high-stakes program-level assessments reduce both frequency and specificity, limiting opportunities for learning to feed forward. Without prior scaffolding, integrative tasks may feel sudden, overwhelming, and unrepresentative of students' actual capabilities, creating bottlenecks that disproportionately amplify pressure and fairness concerns.

These practical challenges also raise deeper design questions that institutions must address explicitly. How many lane 1 tasks are needed to reliably assure each PLO at the appropriate level (e.g., in the Australian Qualifications Framework or comparable)? Are all PLOs assessed with equal weight, depth, and clarity? And what are the consequences if a single lane 1 assessment is highly integrative or cumulative across multiple years, potentially carrying irreversible stakes for student progression?

To mitigate these challenges, institutions should adopt a developmental, distributed model of assessment, embedding multiple lane 1 checkpoints across the program and integrating them with lane 2 activities. Staged milestones, low-weight summative tasks, or hybrid assessments, such as capstone projects that incorporate curated lane 2 evidence, can provide ongoing insight into student progression, reinforce feedback literacy, and support self-regulated learning while maintaining the evidentiary integrity of high-stakes certification. By spreading assessment across multiple points and sequencing it developmentally, the two-lane model has the potential to balance rigor, flexibility, and equity, ensuring that certification reflects learning that has been meaningfully developed rather than arbitrarily judged.

5.3 AI-Generated Work in Lane 2

As noted above, the low-stakes character of lane 2 creates conditions that can be exploited through gen-AI in ways that undermine rather than support learning. In particular, students may over-rely on gen-AI tools to complete formative tasks. While this behavior does not necessarily constitute an academic integrity breach, since lane 2 assessments are not certified, it poses a different kind of threat: it undermines the learning process itself. If students submit AI-generated work and receive feedback on that work, the feedback no longer reflects their own development. As a result, they may receive falsely positive signals and reach lane 1 assessments unprepared.

This phenomenon introduces a paradox. Lane 2 is intended as a safe space: low-risk, formative, and developmental (Steel, 2024). Yet it is precisely this low-risk environment that can be exploited in ways that reduce its pedagogical function. Addressing this challenge does not mean restricting AI use. Instead, it calls for a stronger emphasis on feedback and critical AI literacies as foundational capabilities. Students must be taught how to use AI constructively to support, rather than replace, their learning. Frequent, timely feedback checkpoints, particularly those that involve dialogue, reflection, or in-person engagement, can help ensure that learners track their genuine progress rather than the illusion of it.

5.4 Faculty Development and Institutional Support

From a faculty perspective, one of the most significant challenges in implementing a two-lane assessment model is the shift it requires in academic culture and professional practice. Shifting from a course-centric mindset toward a program-level logic demands a broader perspective: educators must see their teaching and assessment practices as part of an integrated learning journey across a degree program (Hillier, 2024). In some institutions this will require professional learning to develop a shared understanding of the two-lane model and the role of secure, distributed assessment in evidencing PLOs. Staff must become fluent in using shared criterion language, interpreting program-level rubrics, and contributing to capabilities mapping. Collaborative curriculum design becomes essential, enabling cross-course coherence and the scaffolding of learning over time. Feedback literacy also needs strengthening so that instructors can provide feedback that helps students reflect on progress across the program, not just within a single course. To support this shift, institutions must allocate time and resources beyond normal teaching loads. Implementing the two-lane model requires a coordinated effort, and the labor involved must be recognized and made visible in workload models if the required structural and cultural change is to be sustained.

6. CONCLUSION

The introduction of a two-lane assessment model represents more than the addition of secure evidentiary assessment: it requires institutions to reconsider how CA operates across the full architecture of a program. While the foundational principles of CA (intentionality, transparency, and outcome-oriented design) remain valuable, the structural shift inherent in the two-lane model challenges the traditional course-centric application of alignment. When certification of learning is increasingly concentrated in secure, program-level assessment, relying on CLOs as the primary anchor for evidentiary assurance becomes insufficient. The burden passes to PLOs, yet PLOs as conventionally written are not designed to carry it. This is the curriculum design problem the two-lane model surfaces, and it is the problem this paper has addressed.

This article has argued that addressing this shift requires a restructuring of alignment across program-level assessment systems, rather than the retrofitting of new assessment models into existing course structures for pragmatic reasons. The proposed curriculum design framework demonstrates how CA can be operationalized within a two-lane assessment architecture, beginning with the redesign of PLOs and extending through shared program-level criterion language, capabilities mapping, and longitudinal feedback strategies across multiple courses. In doing so, the framework relocates the operational anchor of alignment from the course to the program level, providing the design specificity that program-level lane 1 assessment requires but has lacked.

Although the two-lane model has emerged within Australian higher education discussions about gen-AI, the challenges it addresses are not unique to that context. As online and digitally mediated learning environments expand internationally, institutions increasingly face similar tensions between supporting developmental learning processes and producing defensible evidence of achievement. The framework presented in this article, therefore, offers a substantive extension of CA for AI-mediated assessment contexts, with the reconception of the role and design of PLOs as its foundational move, and a set of interconnected design artefacts that make that reconception operational across the full architecture of a program. Without a reconception of what PLOs are for and how they must be designed, program-level two-lane implementations may achieve the structural separation of lane 1 and lane 2 while failing to resolve the deeper curriculum design question: What exactly is being certified, and against what standard?

REFERENCES

Australian Catholic University. (2025). Two-lane assessment approach. Australian Catholic University. https://staff.acu.edu.au/our_university/centre-for-education-and-innovation/curriculum-design-and-quality-assurance/assessment-design-and-feedback/two-lane-assessment-approach

Banta, T. W., & Blaich, C. (2010). Closing the assessment loop. Change: The Magazine of Higher Learning, 43(1), 22–27.

Biggs, J. (1996). Enhancing teaching through constructive alignment. Higher Education, 32(3), 347–364.

Biggs, J., & Tang, C. (2011). Teaching for quality learning at university: What the student does (4th ed.). Open University Press.

Biggs, J., Tang, C., & Kennedy, G. (2022). Teaching for quality learning at university (5th ed.). McGraw-Hill Education.

Boud, D., & Associates. (2010). Assessment 2020: Seven propositions for assessment reform in higher education. Australian Learning and Teaching Council.

Boud, D., & Molloy, E. (2012). Rethinking models of feedback for learning: The challenge of design. Assessment & Evaluation in Higher Education, 38(6), 698–712.

Boud, D., & Molloy, E. (2013). Feedback in higher and professional education: Understanding it and doing it well. Routledge.

Bridgeman, A., Liu, D., & Weeks, R. (2024, September 12). Program level assessment design and the two-lane approach. The University of Sydney. https://educational-innovation.sydney.edu.au/teaching@sydney/program-level-assessment-two-lane/

Carless, D., & Boud, D. (2018). The development of student feedback literacy: Enabling uptake of feedback. Assessment & Evaluation in Higher Education, 43(8), 1315–1325.

Charlton, N., & Newsham-West, R. (2022). Program-level assessment planning in Australia: The considerations and practices of university academics. Assessment & Evaluation in Higher Education, 48(6), 820–833.

Corbin, T., Dawson, P., & Liu, D. (2025). Talk is cheap: Why structural assessment changes are needed for a time of GenAI. Assessment & Evaluation in Higher Education, 50(7), 1087–1097.

Cotton, D. R. E., Cotton, P. A., & Shipway, J. R. (2024). Chatting and cheating: Ensuring academic integrity in the era of ChatGPT. Innovations in Education and Teaching International, 61(2), 228–239.

Cramp, J., Medlin, J. F., Lake, P., & Sharp, C. (2019). Lessons learned from implementing remotely invigilated online exams. Journal of University Teaching and Learning Practice, 16(1).

Dawson, P., Bearman, M., Dollinger, M., & Boud, D. (2024). Validity matters more than cheating. Assessment & Evaluation in Higher Education, 49(7), 1005–1016.

Gamage, K. A. A., de Silva, E. K., & Gunawardhana, N. (2020). Online delivery and assessment during COVID-19: Safeguarding academic integrity. Education Sciences, 10(11), 301.

Guy, C. (2025). The two-lane road to hell is paved with good intentions: Why an all-or-none approach to generative AI, integrity, and assessment is insupportable. Higher Education Research & Development, 44(8), 2151–2158.

Havnes, A., & Prøitz, T. S. (2016). Why use learning outcomes in higher education? Exploring the grounds for academic resistance and reclaiming the value of unexpected learning. Educational Assessment, Evaluation and Accountability, 28(3), 205–223.

Hillier, M. (2024). Programmatic assessment. Macquarie University. https://ishare.mq.edu.au/prod/file/56bad8d6-6f58-4830-ae97-67a7f7244706/1/MQ_2023_Programmatic_Assessment_Quick_Guide_Macquarie_University.pdf

Holden, O. L., Norris, M. E., & Kuhlmeier, V. A. (2021). Academic integrity in online assessment: A research review. Frontiers in Education, 6, 639814.

Liu, D., & Bridgeman, A. (2023, July 12). What to do about assessments if we can't out-design or out-run AI? The University of Sydney. https://educational-innovation.sydney.edu.au/teaching@sydney/what-to-do-about-assessments-if-we-cant-out-design-or-out-run-ai/

Lodge, J. M., Bearman, M., Dawson, P., Gniel, H., Harper, R., Liu, D., McLean, J., Ucnik, L., & Associates. (2025). Enacting assessment reform in a time of artificial intelligence. Tertiary Education Quality and Standards Agency, Australian Government. https://www.teqsa.gov.au/sites/default/files/2025-09/enacting-assessment-reform-in-a-time-of-artificial-intelligence.pdf

Moss, P., & Ruthnappulige, S. (2025). Constructive alignment: A journey, not a destination. In S. Barker, S. Kelly, R. McInnes, & T. Johnson (Eds.), Future-focused: Educating in an era of continuous change—Proceedings of the 2025 Australasian Society for Computers in Learning in Tertiary Education (pp. 5–6). ASCILITE.

Nicol, D. J., & Macfarlane-Dick, D. (2006). Formative assessment and self-regulated learning: A model and seven principles of good feedback practice. Studies in Higher Education, 31(2), 199–218.

Perkins, M., Furze, L., Roe, J., & MacVaugh, J. (2024). The artificial intelligence assessment scale (AIAS): A framework for ethical integration of generative AI in educational assessment. Journal of University Teaching and Learning Practice, 21(6).

Ruge, G., Tokede, O., & Tivendale, L. (2019). Implementing constructive alignment in higher education: Cross-institutional perspectives from Australia. Higher Education Research & Development, 38(4), 833–848.

Sadler, I., Reimann, N., & Sambell, K. (2022). Feedforward practices: A systematic review of the literature. Assessment & Evaluation in Higher Education, 48(3), 305–320.

Shaikh, U. U., & Asif, Z. (2022). Persistence and dropout in higher online education: Review and categorization of factors. Frontiers in Psychology, 13, 902070.

Steel, A. (2024). 2 lanes or 6 lanes? It depends on what you are driving: Use of AI in Assessment. University of New South Wales. https://www.education.unsw.edu.au/news-events/news/two-six-lanes-ai-assessment

University of Auckland. (2026). Two-lane approach to assessment. TeachWell Digital. https://teachwell.auckland.ac.nz/assessment/two-lane-approach-to-assessment/

University of Bath. (2026). The two-lane approach to genAI assessment categorisation. Learning and Teaching Hub. https://teachinghub.bath.ac.uk/guide/genai-and-assessment/
